AI chatbots play a vital role in e-commerce. To keep user interactions fast and relevant, a chatbot needs efficient memory management. Here’s how we can improve it.

Embeddings and history messages are the two main factors in memory management.

Embeddings in e-commerce AI Chatbot

Vector embeddings are numerical representations of text. They capture meaning and context, which lets the chatbot find data similar to the user’s query.

For example, the user might ask an e-commerce AI chatbot, “Do you have blue jeans?”

First, the chatbot converts the query text to an embedding. Then it runs a similarity search in a vector database, where our product embeddings are already stored, to find similar products.

The similar products found through embeddings are then sent to the AI chatbot as context for its answer.

AI chatbot: Yes, we have blue jeans. Here are the details…

That’s why embeddings are important in AI chatbots.
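
The lookup described above can be sketched in a few lines. This is a minimal illustration, not a production setup: the three-dimensional vectors, the product names, and the helper `find_similar_products` are all made up for the example, and a real system would get its vectors from an embedding model and run the search inside a vector database.

```python
import math

# Toy 3-dimensional vectors standing in for real product embeddings.
# A real embedding model returns hundreds or thousands of dimensions.
PRODUCT_EMBEDDINGS = {
    "blue jeans": [0.9, 0.1, 0.0],
    "black jeans": [0.7, 0.3, 0.1],
    "red t-shirt": [0.1, 0.9, 0.2],
    "running shoes": [0.0, 0.2, 0.9],
}


def cosine_similarity(a, b):
    """Return the cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def find_similar_products(query_embedding, product_embeddings, top_k=2):
    """Rank products by similarity to the query and keep the best top_k."""
    scored = [
        (name, cosine_similarity(query_embedding, vector))
        for name, vector in product_embeddings.items()
    ]
    scored.sort(key=lambda item: item[1], reverse=True)
    return scored[:top_k]


# Hypothetical embedding of the user's question about blue jeans.
query_embedding = [0.85, 0.15, 0.05]
matches = find_similar_products(query_embedding, PRODUCT_EMBEDDINGS)
print([name for name, _ in matches])
```

With these toy vectors the two jeans products rank highest, and their details are what would be passed to the chatbot.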

Methods to create Embeddings

There are many ways to create embeddings:

- TF-IDF

- Word2vec

- GloVe

- BERT

- Other transformer-based models, etc.

In recent years, transformer-based embeddings have generally outperformed the other methods.

Creating embeddings with a transformer model requires a decent CPU and plenty of RAM. Suppose we have to create 1 lakh (100,000) product embeddings: CPU and memory usage rise accordingly.

If 20k users send queries at the same time, creating their query embeddings adds to the load, and embedding creation becomes slow.

The solution is to use hosted embedding APIs.
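
One part of that cost is simply holding the vectors. The rough estimate below assumes each value is stored as a 4-byte float32 and ignores index and metadata overhead. The helper name and the dimension counts (768, 1536, 3072) are assumptions chosen for illustration, picked as typical output sizes of current embedding models.

```python
def embedding_storage_bytes(num_vectors, dimensions, bytes_per_value=4):
    """Raw size of the vectors alone, assuming float32 values by default."""
    return num_vectors * dimensions * bytes_per_value


NUM_PRODUCTS = 100_000  # 1 lakh products

for dimensions in (768, 1536, 3072):
    size_mib = embedding_storage_bytes(NUM_PRODUCTS, dimensions) / (1024 ** 2)
    print(f"{dimensions} dimensions: about {size_mib:.0f} MiB")
```

Under these assumptions the raw vectors for 1 lakh products take roughly 0.3 GiB to 1.1 GiB, before counting the memory the embedding model itself needs.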

Hosted Embeddings APIs

Here are some hosted APIs:

- OpenAI: provides the text-embedding-3-small, text-embedding-3-large and text-embedding-ada-002 models for creating embeddings.

- Gemini: provides the text-embedding-004 embedding model.
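
As a rough sketch of how such an API might be called, the snippet below uses the OpenAI Python SDK's `client.embeddings.create` call with the text-embedding-3-small model. The wrapper names `openai_embed_batch` and `embed_in_batches` and the batch size of 100 are assumptions made for this example. The SDK import sits inside the function, so the batching helper can be tried with any other embedding function.

```python
def openai_embed_batch(texts, model="text-embedding-3-small"):
    """Embed a list of strings with the OpenAI embeddings endpoint.

    Needs the `openai` package and an OPENAI_API_KEY environment variable.
    """
    from openai import OpenAI  # imported lazily; only needed for real calls

    client = OpenAI()
    response = client.embeddings.create(model=model, input=texts)
    # Sort by index so the vectors line up with the input order.
    ordered = sorted(response.data, key=lambda item: item.index)
    return [item.embedding for item in ordered]


def embed_in_batches(texts, embed_batch, batch_size=100):
    """Send texts to embed_batch in chunks and return one vector per text."""
    vectors = []
    for start in range(0, len(texts), batch_size):
        vectors.extend(embed_batch(texts[start:start + batch_size]))
    return vectors
```

A call such as `embed_in_batches(product_descriptions, openai_embed_batch)` would then embed a whole catalogue in a handful of requests, with the model running on the provider's hardware rather than ours.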

Advantages of Using Embedding APIs

These APIs serve pre-trained embedding models, which saves time compared to building and hosting a local embedding solution. Developers can then concentrate on improving the chatbot’s features.

History Messages in e-commerce AI Chatbot

History messages are also part of the AI chatbot; they let users carry on a continuous conversation. These chats take up memory.

We can use a session-based approach. It is easy to implement and fast, but it leads to data loss and limited context, because the history disappears when the session ends.
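
A session-based store can be as small as a dictionary held in process memory. The class below is a hypothetical sketch (the name `SessionHistory` and its methods are made up for illustration). It shows both the appeal and the drawback: reads and writes are trivial, but nothing survives the end of the session.

```python
from collections import defaultdict


class SessionHistory:
    """Keeps chat messages in process memory, keyed by session id."""

    def __init__(self):
        self._messages = defaultdict(list)

    def add(self, session_id, role, content):
        self._messages[session_id].append({"role": role, "content": content})

    def get(self, session_id):
        # Return a copy so callers cannot mutate the stored history.
        return list(self._messages.get(session_id, []))

    def end_session(self, session_id):
        # Once the session ends, its history is gone for good.
        self._messages.pop(session_id, None)


history = SessionHistory()
history.add("session-1", "user", "Do you have blue jeans?")
history.add("session-1", "assistant", "Yes, we have blue jeans.")
print(len(history.get("session-1")))
history.end_session("session-1")
print(history.get("session-1"))
```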

Best Practices for Memory Management

These are some basic practices for memory management:

- Hosted API: Select the right hosted model API to reduce the embedding creation load.

- Embedding Size: Choose the smallest embedding size that still captures enough context and meaning, for efficient memory usage.

- Vector Database: Select a memory-efficient vector database such as ChromaDB.

- Database-based storage: Store chat history in a database, and apply strategies to manage that history efficiently.

- Message Capping and Dynamic Trimming: Cap the history, e.g., by selecting only the last 10 messages, and trim it dynamically by removing older messages. Also select the right storage for the history.

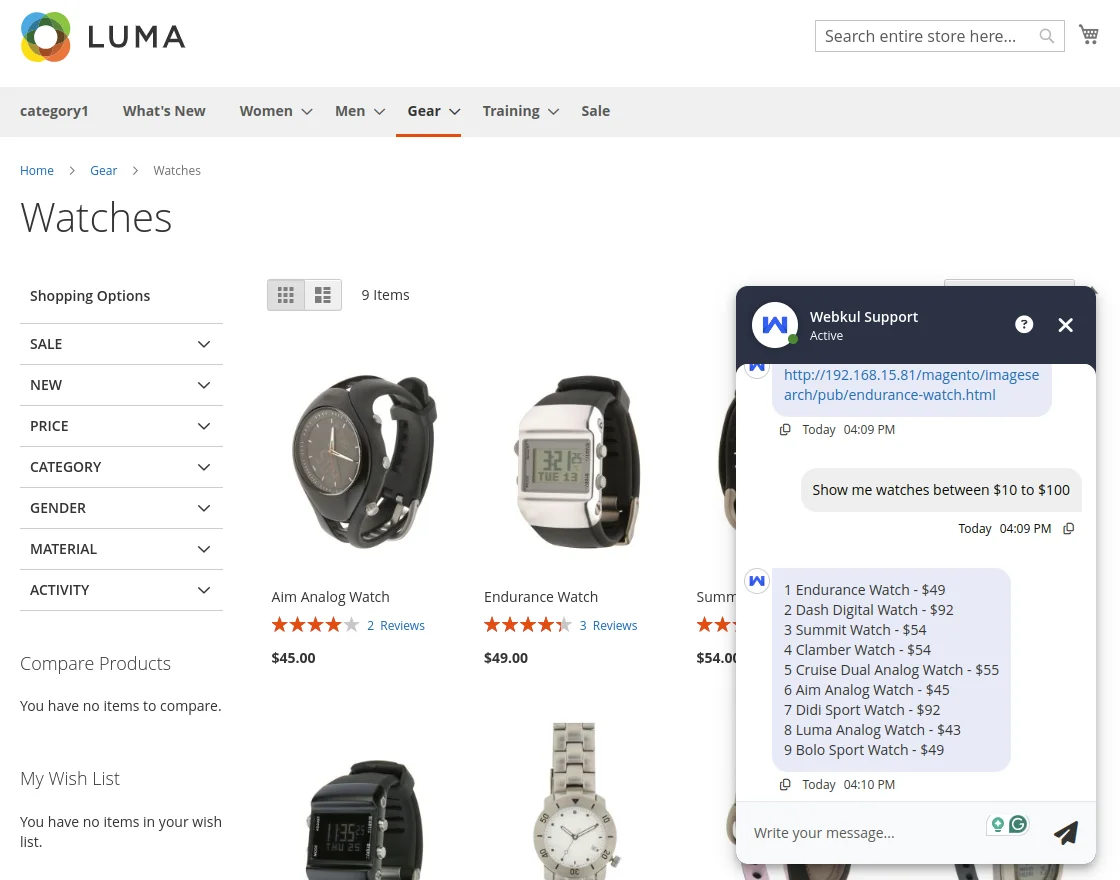
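
The history-related practices above can be combined in a short sketch. It uses Python's built-in `sqlite3` module as a stand-in for whatever database is actually chosen. The table name `chat_messages`, the function names, and the character budget are assumptions for illustration, and the character count is only a crude substitute for a real token count.

```python
import sqlite3


def open_history_db(path=":memory:"):
    """Open the database and create the chat_messages table if needed."""
    conn = sqlite3.connect(path)
    conn.execute(
        """
        CREATE TABLE IF NOT EXISTS chat_messages (
            id INTEGER PRIMARY KEY AUTOINCREMENT,
            session_id TEXT NOT NULL,
            role TEXT NOT NULL,
            content TEXT NOT NULL
        )
        """
    )
    return conn


def save_message(conn, session_id, role, content):
    """Persist one message so it survives beyond the current session."""
    conn.execute(
        "INSERT INTO chat_messages (session_id, role, content) VALUES (?, ?, ?)",
        (session_id, role, content),
    )
    conn.commit()


def last_messages(conn, session_id, limit=10):
    """Message capping: fetch only the newest `limit` messages, oldest first."""
    rows = conn.execute(
        "SELECT role, content FROM chat_messages "
        "WHERE session_id = ? ORDER BY id DESC LIMIT ?",
        (session_id, limit),
    ).fetchall()
    return [{"role": role, "content": content} for role, content in reversed(rows)]


def trim_to_budget(messages, max_chars=2000):
    """Dynamic trimming: drop the oldest messages until the total fits."""
    kept = []
    total = 0
    for message in reversed(messages):  # walk from newest to oldest
        size = len(message["content"])
        if total + size > max_chars:
            break
        kept.append(message)
        total += size
    kept.reverse()  # restore chronological order
    return kept
```

In use, each incoming message would be saved with `save_message`, and the prompt for the chatbot would be built from `trim_to_budget(last_messages(conn, session_id))`, so the full history stays in the database while only a bounded slice is loaded into memory.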

Check out our latest e-commerce AI Chatbot

This is our Magento 2 AI chatbot, which works with dynamic embedding APIs and is memory efficient.

Conclusion

Effective memory management in e-commerce AI chatbots is key to providing outstanding user experiences. By adopting these strategies, businesses can optimize their processes and improve scalability and efficiency.
