AI coding agents have progressed rapidly, yet they have shared one major limitation: they still needed constant supervision.

Most agents ran locally, step by step, and demanded your attention at every stage.

They were helpful, but nowhere near genuinely independent.

Mistral AI is changing that with Mistral Medium 3.5, particularly when it is combined with Vibe remote agents and Work Mode in Le Chat.

Artificial intelligence is no longer something you stay in constant contact with; it is something that works on your behalf in the background.

You delegate the work, step away, and come back to a finished result.

Mistral Medium 3.5 — A Unified Model

Mistral Medium 3.5 is a 128B dense model that integrates several functions into one system. Previously, you needed separate models for chat, reasoning, and coding.

Now a single set of weights handles everything.

It replaces:

- Mistral Medium 3.1

- Magistral (reasoning)

- Devstral 2 (coding agents)

This unification improves consistency and eliminates the small inconsistencies that occurred when switching between specialized models.

Built for Long, Complex Tasks

Most LLMs are geared towards quick chats. However, debugging large codebases, research, and refactoring are all multi-step processes that require persistence.

Mistral Medium 3.5 is built specifically for this:

- 256k context window – can easily work with whole codebases.

- Controllable reasoning effort – adjust how deeply it thinks on each task.

- Predictable long-term execution – behavior stays consistent over long-running tasks.

This makes it far better suited to agentic workflows than traditional chat models.
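To get a feel for what a 256k context window means in practice, the sketch below estimates whether a set of source files would fit. It is a rough illustration only: the 4-characters-per-token ratio is a common rule of thumb rather than the model's actual tokenizer, and the helper names (`estimate_tokens`, `fits_in_context`, `load_sources`) and the 16,000-token output reserve are assumptions made for this example.

```python
from pathlib import Path

CONTEXT_WINDOW_TOKENS = 256_000  # figure quoted in this article
CHARS_PER_TOKEN = 4              # rough heuristic, NOT the model's real tokenizer


def estimate_tokens(text: str) -> int:
    """Approximate the token count from the character count (rounded up)."""
    return -(-len(text) // CHARS_PER_TOKEN)


def fits_in_context(texts: list[str], reserve_for_output: int = 16_000) -> bool:
    """Return True if the texts fit while leaving room for the model's reply."""
    total = sum(estimate_tokens(t) for t in texts)
    return total + reserve_for_output <= CONTEXT_WINDOW_TOKENS


def load_sources(root: str, suffix: str = ".py") -> list[str]:
    """Read every file under `root` whose name ends with `suffix`."""
    return [p.read_text(errors="ignore") for p in Path(root).rglob(f"*{suffix}")]
```

Calling `fits_in_context(load_sources("path/to/repo"))` gives a quick yes/no before you hand a whole repository to an agent. For exact numbers you would need the tokenizer that ships with the model.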

Core Capabilities of Mistral Medium 3.5

The model is very versatile. Key strengths include:

- Excellent instruction following

- Strong deep reasoning (math, logic, multi-step problems)

- High-quality code generation

- Native function calling

- Consistent structured output (JSON and other tool-readable formats)

- Multimodal support (text + images)

The vision features are practical and cope with real-world variation in images.
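Function calling and structured output usually come down to the same pattern: the model emits a tool name plus JSON arguments, and your code validates and dispatches that call. The sketch below shows the receiving side only and does not use any Mistral SDK. The tool `get_open_issues`, the `{"name": ..., "arguments": ...}` shape, and the `dispatch_tool_call` helper are all hypothetical, chosen for illustration.

```python
import json


def get_open_issues(repo: str, limit: int = 5) -> list[str]:
    """Hypothetical tool: a real version would query an issue tracker."""
    return [f"{repo}#{n}" for n in range(1, limit + 1)]


# Registry mapping tool names the model may emit to local Python functions.
TOOLS = {"get_open_issues": get_open_issues}


def dispatch_tool_call(raw: str) -> str:
    """Parse a JSON tool call, run the matching function, return a JSON reply."""
    try:
        call = json.loads(raw)
    except json.JSONDecodeError as exc:
        return json.dumps({"error": f"invalid JSON: {exc.msg}"})
    if not isinstance(call, dict):
        return json.dumps({"error": "tool call must be a JSON object"})

    name = call.get("name")
    args = call.get("arguments", {})
    if name not in TOOLS:
        return json.dumps({"error": f"unknown tool: {name}"})
    if not isinstance(args, dict):
        return json.dumps({"error": "arguments must be a JSON object"})

    try:
        result = TOOLS[name](**args)
    except TypeError as exc:  # missing or unexpected arguments
        return json.dumps({"error": f"bad arguments: {exc}"})
    return json.dumps({"result": result})
```

Returning errors as JSON instead of raising lets an agent loop feed the failure back to the model so it can correct the call, which matters when tools are chained over many steps.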

Why This Model Matters

The most striking aspect of Mistral Medium 3.5 is its stability on long tasks.

Most existing models perform well on single responses but fail when tools are chained, tasks run long, and outputs must feed into other systems.

This model was developed to handle exactly those scenarios.

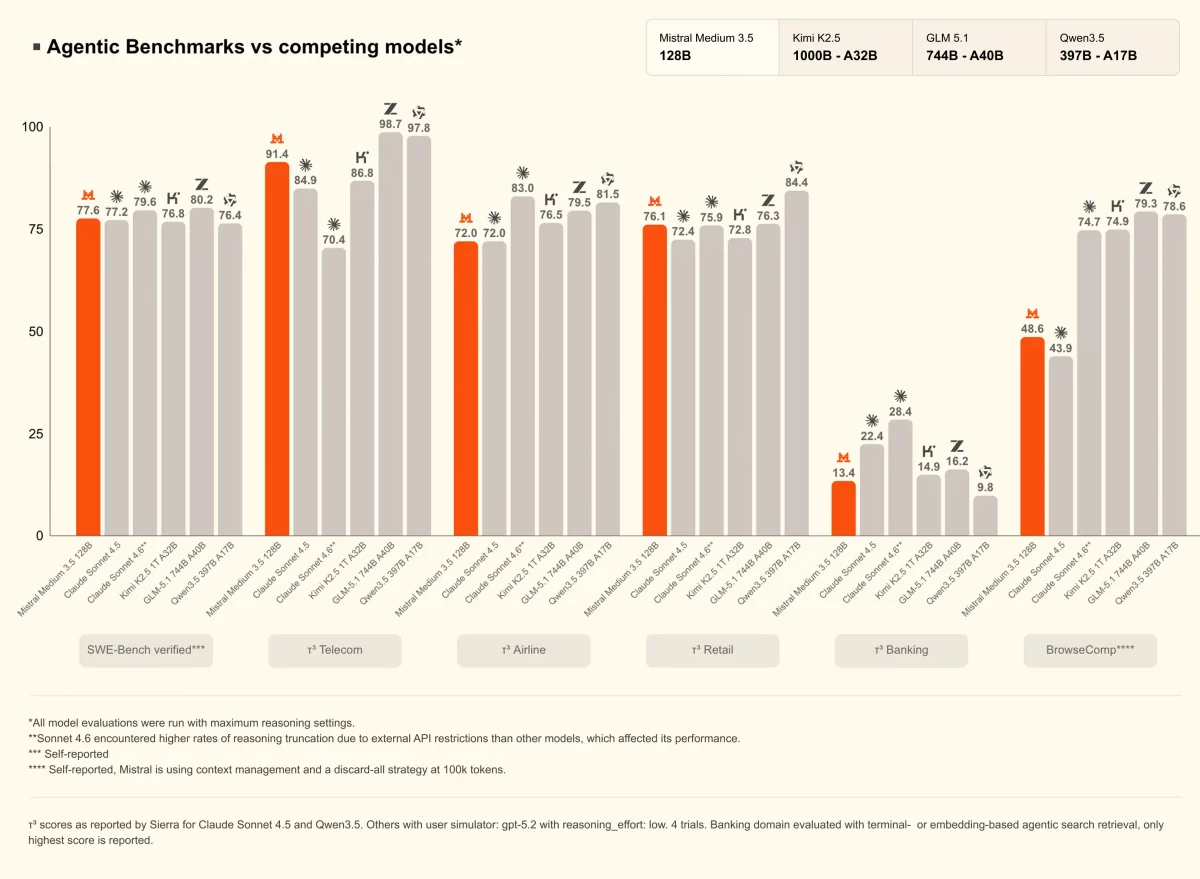

Image Source: mistral.ai/news

Benchmarks (Real-World Signal):

- 77.6 on SWE-Bench Verified (software engineering)

- 91.4 on τ³-Telecom (agentic workflows)

It is clearly much stronger than earlier Mistral coding models and has completely replaced Devstral in the Vibe system.

Remote Coding Agents with Vibe: The Game Changer

This is where things get exciting.

Vibe by Mistral moves coding agents to the cloud.

They operate asynchronously – they keep running even when you are offline or otherwise busy.

Typical workflow:

- You start a task through the CLI or chat.

- The agent works in a cloud environment.

- Progress is tracked automatically.

- You receive the end product (usually as a clean PR).

You no longer need to babysit every step.
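The workflow above (start a task, let it run, collect the result) follows a familiar asynchronous pattern. The sketch below simulates it locally with `asyncio` to show the shape of the interaction. It does not call Vibe or any Mistral API: `AgentTask`, `run_agent`, `delegate`, and the step names are hypothetical stand-ins.

```python
import asyncio
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class AgentTask:
    """Hypothetical record of a delegated task and its progress."""
    description: str
    status: str = "queued"
    log: list[str] = field(default_factory=list)
    result: Optional[str] = None


async def run_agent(task: AgentTask, steps: list[str]) -> None:
    """Simulated agent: walks through its steps and records progress."""
    task.status = "running"
    for step in steps:
        await asyncio.sleep(0)  # yield control; real work would happen remotely
        task.log.append(step)
    task.result = f"pull request for: {task.description}"
    task.status = "done"


async def delegate(description: str) -> AgentTask:
    """Start a task in the background, then collect the finished result."""
    task = AgentTask(description)
    steps = ["clone repo", "apply changes", "run tests", "open PR"]
    worker = asyncio.create_task(run_agent(task, steps))
    # The caller is free to do unrelated work here while the agent runs.
    await worker
    return task


if __name__ == "__main__":
    finished = asyncio.run(delegate("update dependencies"))
    print(finished.status, finished.log, finished.result)
```

The point of the design is that the caller only touches the task at the start and the end. Everything in between is recorded in the task's log rather than requiring attention.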

Best Use Cases

These asynchronous agents are best suited to structured, time-consuming tasks:

- Large-scale code refactoring

- Test suite generation

- Bug fixing

- Dependency updates

- CI/CD pipeline debugging

You are no longer just getting AI that assists you with coding.

You are getting AI that does the coding job on your behalf.

Availability of Mistral Medium 3.5

Mistral Medium 3.5 is available through:

- Vibe → Async coding agents

- Le Chat → Workflows and interaction

- API → Custom integrations

It is also open weight (modified MIT license), so it can be self-hosted, and it works best with vLLM and SGLang.
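If you self-host with vLLM, the server exposes an OpenAI-compatible HTTP API, by default at `http://localhost:8000/v1`. The sketch below builds a chat request against that endpoint using only the standard library. The model ID is a placeholder (this article does not give the repository name), and `build_chat_request` and `ask` are illustrative helpers, not part of vLLM.

```python
import json
import urllib.request

BASE_URL = "http://localhost:8000/v1"  # vLLM's default OpenAI-compatible endpoint
MODEL_ID = "your-org/your-model"       # placeholder: use the ID you served with vLLM


def build_chat_request(prompt: str, max_tokens: int = 512) -> urllib.request.Request:
    """Build a POST request for the /chat/completions route."""
    payload = {
        "model": MODEL_ID,
        "messages": [{"role": "user", "content": prompt}],
        "max_tokens": max_tokens,
    }
    return urllib.request.Request(
        f"{BASE_URL}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def ask(prompt: str) -> str:
    """Send the request and return the first reply (requires a running server)."""
    with urllib.request.urlopen(build_chat_request(prompt)) as resp:
        body = json.load(resp)
    return body["choices"][0]["message"]["content"]
```

Because the endpoint follows the OpenAI request format, existing OpenAI-style clients can usually be pointed at it by changing only the base URL and model name.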

Conclusion

Mistral Medium 3.5 is not just another model upgrade but a fundamental change in how we use AI.

We are moving beyond step-by-step prompting towards assigning tasks and reviewing outcomes.

The Stack:

- Model → Mistral Medium 3.5

- Execution → Vibe agents

- Interface → Le Chat Work Mode

Together, these pieces move AI from a tool that assists you to one that can work autonomously on your behalf.

