Qwen 3.5 (397B) is a newly released, highly capable open-source multimodal model.

It posts strong benchmark results, and with managed API access, developers can use it without worrying about infrastructure.

What makes Qwen 3.5 different

Qwen 3.5 is not just bigger. It changes the design and training so multimodal ability is built in, not added later.

Its most notable change is an integrated vision-language foundation.

Text and image data are combined early in training, instead of being trained separately and merged afterward.

This joint training helps the model understand text, images, and other data together, which improves its reasoning and coding.
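To make early fusion concrete, here is a minimal Python sketch. It assumes a generic design in which image patches are turned into vectors and placed in the same input sequence as text-token embeddings. The article does not describe Qwen 3.5's actual tokenizer or vision encoder, so `ImagePatches`, `embed_text`, and `build_fused_sequence` are hypothetical names with toy embeddings.

```python
from dataclasses import dataclass
from typing import List, Union

EMBED_DIM = 4  # toy embedding size, for illustration only


@dataclass
class ImagePatches:
    """Hypothetical stand-in for the output of a vision encoder:
    one vector per image patch, already in the model's embedding space."""
    patches: List[List[float]]


Segment = Union[str, ImagePatches]


def embed_text(token: str) -> List[float]:
    # Hypothetical text embedding: a deterministic toy vector per token.
    h = sum(ord(c) for c in token)
    return [float((h + i) % 7) for i in range(EMBED_DIM)]


def build_fused_sequence(segments: List[Segment]) -> List[List[float]]:
    """Interleave text-token and image-patch vectors into ONE sequence,
    so a single transformer can attend over both modalities jointly."""
    sequence: List[List[float]] = []
    for seg in segments:
        if isinstance(seg, ImagePatches):
            sequence.extend(seg.patches)  # image patches join the same stream
        else:
            for tok in seg.split():       # naive whitespace "tokenizer"
                sequence.append(embed_text(tok))
    return sequence


prompt = ["What is in", ImagePatches([[0.1] * EMBED_DIM, [0.2] * EMBED_DIM]), "this picture?"]
seq = build_fused_sequence(prompt)
print(f"{len(seq)} positions in one fused sequence")
```

With a single sequence, every attention layer can relate words and image regions directly, rather than joining two separately trained models at the end.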

Another upgrade is its hybrid design using Gated Delta Networks and a sparse Mixture-of-Experts system.

In short, this means:

Only a small subset of the network is activated for each token.

The model still has the capacity of a 397B-parameter model.

Inference is much faster and cheaper than with dense models of similar scale.

That is why the model is surprisingly responsive despite its size.
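Sparse activation of this kind is typically implemented with top-k expert routing. The following is a minimal sketch of that general technique, not Qwen's code: a router scores every expert for a token, only the `top_k` highest-scoring experts run, and their outputs are mixed with renormalized gate weights. The 512-expert, top-10 setting comes from the architecture figures later in this article; the function names, the toy scalar experts, and the random router logits are assumptions for illustration.

```python
import math
import random
from typing import Callable, List, Tuple

NUM_EXPERTS = 512  # expert count given in the article
TOP_K = 10         # experts used per token, per the article


def softmax(xs: List[float]) -> List[float]:
    m = max(xs)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def route_token(router_logits: List[float], top_k: int) -> List[Tuple[int, float]]:
    """Return (expert_index, gate_weight) for the top_k highest-scoring experts."""
    ranked = sorted(range(len(router_logits)), key=lambda i: router_logits[i], reverse=True)
    chosen = ranked[:top_k]
    weights = softmax([router_logits[i] for i in chosen])
    return list(zip(chosen, weights))


def moe_forward(x: float, router_logits: List[float],
                experts: List[Callable[[float], float]], top_k: int) -> float:
    """Mix the outputs of the selected experts only; all others are skipped."""
    return sum(w * experts[i](x) for i, w in route_token(router_logits, top_k))


# Toy usage: random router scores for one token, scalar "experts" that scale x.
random.seed(0)
logits = [random.gauss(0.0, 1.0) for _ in range(NUM_EXPERTS)]
experts = [lambda x, s=i: s * x for i in range(NUM_EXPERTS)]
routes = route_token(logits, TOP_K)
print(f"{len(routes)} of {NUM_EXPERTS} experts active "
      f"({len(routes) / NUM_EXPERTS:.1%} of the expert pool)")
print("output:", moe_forward(1.0, logits, experts, TOP_K))
```

Taking a softmax over only the selected logits gives the same weights as taking a softmax over all experts and renormalizing the top k. Production MoE layers add details this sketch omits, such as load-balancing losses and, in some designs, shared experts.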

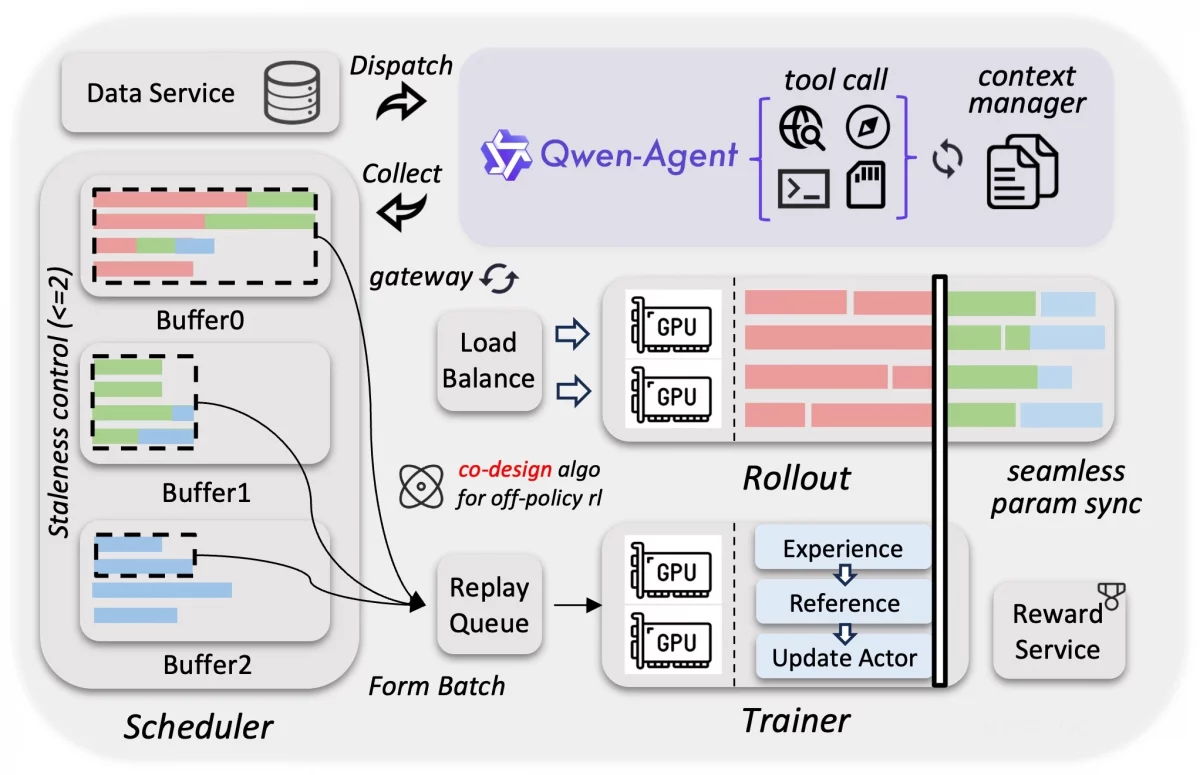

Qwen 3.5: Built for Agentic Environments

Qwen 3.5 places heavy emphasis on large-scale reinforcement learning.

The model was trained to handle real-world tasks and multi-step problems.

It supports 201 languages and handles regional language variants and mixed-language text more reliably.

Training Efficiency at Massive Scale

The training stack is tuned for multimodal workloads.

The team ran reinforcement learning efficiently in simulated environments.

This setup lets training scale without the slowdowns typical of multimodal training.
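The article does not describe Qwen's reinforcement-learning framework. As a generic, hedged illustration of what reinforcement learning in simulation involves for multi-step tasks, the sketch below rolls out episodes in a toy simulated environment where the reward arrives only when the task is finished. `ToySimEnv`, its goal, its step limit, and the policies are all hypothetical, and a real pipeline would also update the policy from these trajectories, which is omitted here.

```python
import random
from typing import Callable, List, Tuple

Step = Tuple[int, int, float]  # (observation, action, reward)


class ToySimEnv:
    """Hypothetical multi-step task: move from 0 to `goal` using +1/-1 actions.

    The reward is sparse: 1.0 only on the step that reaches the goal."""

    def __init__(self, goal: int = 3, max_steps: int = 6):
        self.goal = goal
        self.max_steps = max_steps
        self.pos = 0
        self.steps = 0

    def reset(self) -> int:
        self.pos, self.steps = 0, 0
        return self.pos

    def step(self, action: int) -> Tuple[int, float, bool]:
        self.pos += action
        self.steps += 1
        reached = self.pos == self.goal
        done = reached or self.steps >= self.max_steps
        return self.pos, (1.0 if reached else 0.0), done


def rollout(env: ToySimEnv, policy: Callable[[int], int]) -> List[Step]:
    """Run one episode to completion and record every step."""
    trajectory: List[Step] = []
    obs, done = env.reset(), False
    while not done:
        action = policy(obs)
        next_obs, reward, done = env.step(action)
        trajectory.append((obs, action, reward))
        obs = next_obs
    return trajectory


def collect_batch(policy: Callable[[int], int], n: int) -> List[List[Step]]:
    # Episodes are independent, so a real system can run them in parallel;
    # this sketch runs them one after another for simplicity.
    return [rollout(ToySimEnv(), policy) for _ in range(n)]


random.seed(0)
batch = collect_batch(lambda obs: random.choice([-1, 1]), n=8)
success_rate = sum(traj[-1][2] for traj in batch) / len(batch)
print(f"random policy success rate: {success_rate:.2f}")
```

Because each episode is independent, experience collection can be spread across many simulators at once, which is one reason simulated environments suit large-scale RL.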


Architecture of Qwen 3.5

In essence, Qwen 3.5 is a causal language model with a vision encoder.

It is trained in two phases: pre-training and post-training (alignment).

Here are the main technical features:

Parameters: 397B in total (approximately 17B are active for each token, thanks to MoE routing).

Layers: 60 layers, combining attention blocks with expert-routing (MoE) blocks.

Experts: 512 experts, of which about 10 are used for each token.

Context Length: 262K tokens natively; can be extended to more than 1 million tokens for long-context tasks.

Hidden Dimension: 4096, the width of each token's internal representation.

Attention Design: It combines routed attention and gated linear attention to balance memory use and speed.

Training Technique: Multi-token prediction (MTP) trains the model to predict several upcoming tokens at once.
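The gated linear attention in this hybrid design corresponds to Gated Delta Networks. As a hedged sketch, the code below implements one recurrent step of the gated delta rule as described in the Gated DeltaNet literature, S_t = alpha_t * S_{t-1} (I - beta_t k_t k_t^T) + beta_t v_t k_t^T, with output o_t = S_t q_t. It is an assumption that Qwen 3.5 uses exactly this form; real layers also use multiple heads, learned projections that produce alpha, beta, k, v, and q, and a chunked parallel formulation for training. The function names are hypothetical.

```python
from typing import List

Matrix = List[List[float]]
Vector = List[float]


def gated_delta_step(S: Matrix, k: Vector, v: Vector,
                     alpha: float, beta: float) -> Matrix:
    """One recurrent update: S_new = alpha * S (I - beta k k^T) + beta v k^T.

    S has shape (d_v, d_k). alpha in (0, 1] decays old memory (the gate);
    beta in [0, 1] controls how strongly the value stored for key k is rewritten.
    k is assumed to be L2-normalized."""
    d_v, d_k = len(S), len(k)
    # S k: the value the memory currently associates with key k.
    Sk = [sum(S[i][j] * k[j] for j in range(d_k)) for i in range(d_v)]
    return [[alpha * (S[i][j] - beta * Sk[i] * k[j]) + beta * v[i] * k[j]
             for j in range(d_k)]
            for i in range(d_v)]


def read(S: Matrix, q: Vector) -> Vector:
    """Output o = S q: query the fixed-size memory state."""
    return [sum(S[i][j] * q[j] for j in range(len(q))) for i in range(len(S))]


S = [[0.0, 0.0], [0.0, 0.0]]  # d_v = d_k = 2, memory starts empty
key = [1.0, 0.0]
S = gated_delta_step(S, key, [5.0, 7.0], alpha=1.0, beta=1.0)
print(read(S, key))  # [5.0, 7.0]
S = gated_delta_step(S, key, [1.0, 2.0], alpha=1.0, beta=1.0)
print(read(S, key))  # [1.0, 2.0]: the old value was replaced, not added to
```

The state S stays d_v by d_k no matter how long the sequence gets, which is why linear-attention layers save memory on long contexts compared with full attention, whose key-value cache grows with every token.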

The model is large, but its design keeps computing costs under control.
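A quick back-of-the-envelope calculation shows why sparse routing keeps compute in check. It uses the common rule of thumb that a forward pass costs about 2 FLOPs per parameter used, per token, and it ignores attention over the context and the router itself, so treat the numbers as rough. The 397B and roughly 17B figures come from this article; the comparison against a hypothetical dense 397B model is for illustration only.

```python
TOTAL_PARAMS = 397e9   # total parameters, from the article
ACTIVE_PARAMS = 17e9   # approximate active parameters per token, from the article


def forward_flops_per_token(params: float) -> float:
    # Rule of thumb: ~2 FLOPs (one multiply, one add) per parameter used.
    # Ignores attention-over-context and router costs.
    return 2 * params


active_fraction = ACTIVE_PARAMS / TOTAL_PARAMS
moe_flops = forward_flops_per_token(ACTIVE_PARAMS)
dense_flops = forward_flops_per_token(TOTAL_PARAMS)  # hypothetical dense model

print(f"active fraction: {active_fraction:.1%}")
print(f"sparse MoE: ~{moe_flops / 1e9:.0f} GFLOPs per token")
print(f"dense 397B: ~{dense_flops / 1e9:.0f} GFLOPs per token")
print(f"roughly {dense_flops / moe_flops:.0f}x fewer FLOPs per token")
```

This reports an active fraction of about 4.3% and roughly a 23x reduction in per-token FLOPs relative to the hypothetical dense model.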

Even with efficient routing, Qwen 3.5 is large and needs powerful hardware.
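Compute depends on active parameters, but memory depends on total parameters: routing can select any expert for the next token, so every expert's weights normally have to stay loaded. The rough sketch below estimates weight memory alone, assuming 2 bytes per parameter for BF16, 1 for FP8, and 0.5 for 4-bit quantization, and a hypothetical 80 GB accelerator. It excludes the KV cache, activations, and framework overhead.

```python
import math

TOTAL_PARAMS = 397e9  # total parameters, from the article

# Bytes needed to store one parameter at common precisions.
BYTES_PER_PARAM = {"bf16": 2.0, "fp8": 1.0, "int4": 0.5}


def weight_memory_gb(params: float, dtype: str) -> float:
    """Memory for the weights alone, in gigabytes (1 GB = 1e9 bytes)."""
    return params * BYTES_PER_PARAM[dtype] / 1e9


def accelerators_needed(memory_gb: float, per_device_gb: float = 80.0) -> int:
    """Minimum device count just to hold the weights (hypothetical 80 GB device)."""
    return math.ceil(memory_gb / per_device_gb)


for dtype in ("bf16", "fp8", "int4"):
    mem = weight_memory_gb(TOTAL_PARAMS, dtype)
    print(f"{dtype}: ~{mem:.0f} GB of weights, "
          f"at least {accelerators_needed(mem)} x 80 GB devices")
```

In BF16 the weights alone come to about 794 GB, which needs at least ten 80 GB devices before any memory is set aside for serving.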

That is why managed APIs are useful: they let developers try the model without deploying it themselves.
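As an illustration of managed access, here is a minimal Python sketch that builds a multimodal chat request using only the standard library. It assumes the provider exposes an OpenAI-compatible chat completions endpoint, as many hosts of open models do. `API_URL`, `MODEL_ID`, and the API key are placeholders, so check your provider's documentation for the real endpoint, model name, and response format.

```python
import json
import urllib.request

# Placeholders (assumptions): replace with your provider's real values.
API_URL = "https://api.example.com/v1/chat/completions"
MODEL_ID = "qwen3.5-397b"  # hypothetical model identifier


def build_chat_request(prompt: str, image_url: str, api_key: str) -> urllib.request.Request:
    """Build (but do not send) an OpenAI-style multimodal chat request."""
    payload = {
        "model": MODEL_ID,
        "messages": [{
            "role": "user",
            "content": [
                {"type": "text", "text": prompt},
                {"type": "image_url", "image_url": {"url": image_url}},
            ],
        }],
    }
    return urllib.request.Request(
        API_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json",
                 "Authorization": f"Bearer {api_key}"},
        method="POST",
    )


def send(req: urllib.request.Request) -> str:
    """Perform the network call; assumes an OpenAI-compatible response body."""
    with urllib.request.urlopen(req, timeout=60) as resp:
        body = json.load(resp)
    return body["choices"][0]["message"]["content"]


req = build_chat_request("Describe this chart.", "https://example.com/chart.png", "YOUR_API_KEY")
# print(send(req))  # uncomment once API_URL, MODEL_ID, and the key are real
```

Keeping request construction separate from the network call makes it easy to inspect or log the payload before spending any API credits.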

Why Qwen 3.5 Matters

Qwen 3.5 reflects a larger shift in the evolution of artificial intelligence:

Multimodality is becoming a core capability rather than an add-on.

Sparse expert architectures are making massive models practical to run.

Long-context reasoning is moving into the million-token range.

For developers, this means you can build on a system comparable to top proprietary models without setting up the infrastructure yourself.

Conclusion

Qwen 3.5 is not just about having a massive number of parameters. Instead, it represents a shift in how modern AI models are designed and trained.

By combining advanced techniques such as multimodal reasoning and large-scale reinforcement learning, the model is able to understand and process different types of information more effectively.

Additionally, its architecture focuses on efficiency and scalability, allowing it to handle complex tasks while keeping computational costs manageable.

As a result, Qwen 3.5 is not simply a larger model, but a more capable and adaptable one.

Overall, this model reflects the direction in which AI systems are evolving: toward more integrated, efficient, and intelligent systems that can reason across different types of data.

Want to build AI-powered solutions? Visit Webkul!
